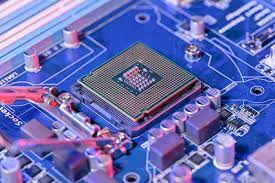

Researchers at Argonne National Laboratory are exploring new ways to improve semiconductor chip design using artificial intelligence.

Their recently published study demonstrates several AI-based techniques for optimizing a process called atomic layer deposition, or ALD. This method produces extremely thin films of material, as little as one atom thick.

ALD partly underpins the production of computer chips, which are now in the midst of a global supply shortage that has driven up the prices of electronics of all types.

“This effort goes beyond the current chip shortage issues, but we are seeing longstanding challenges with semiconductor processing and manufacturing,” Argonne principal materials scientist Angel Yanguas-Gil told NextCo on Thursday.

Yanguas-Gil has long followed semiconductor innovations aligned with AI, and previously pioneered a tiny neuromorphic computer chip inspired by the insect brain. He explained that it is a priority for Argonne to pursue key advances that would lead to substantial improvements in advanced manufacturing. His team received funding for this project from, among other sources, the Energy Department, which he said is “encouraging [them] to look at the entire industry.” The lab has a strong ALD program, and its relationships with the private sector have helped the team fully understand the key challenges ahead, he said.

“The focus is on the use of AI, particularly for manufacturing, in a domestically funded research project that supports the current research,” Yanguas-Gil said.

The details of the new experiments are described in a recent press release from the lab.

ALD can be used to grow thin films for a variety of applications. This happens inside a chemical reactor, where two separate chemical vapors, known as precursors, stick to a surface and build up a fine film over time. According to the lab's release, the technology “excels at growing precise, nanoscale films on complex, 3D surfaces,” prompting scientists to explore new ALD materials for next-generation devices.

New ALD processes are incredibly difficult to develop and optimize. Yanguas-Gil and the lab team therefore explored three optimization strategies: random, expert system, and Bayesian optimization.

“We can use a cooking metaphor to depict the three models,” Yanguas-Gil explained.

Bayesian optimization is a method that tests different conditions, obtains feedback, and learns an internal model that helps it work out which conditions are the most promising to try next. In cooking terms, the method has no information about what “cooking” means: it simply starts with a set of ingredients and oven settings.
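To make the idea concrete, here is a minimal sketch of a Bayesian optimization loop, not the lab's actual code. It assumes a made-up one-dimensional cost function, `simulated_cost`, standing in for an ALD simulation in which a longer precursor dose saturates film growth but costs time. The "internal model" is a small Gaussian process, and the next condition is picked with a lower-confidence-bound rule. The kernel length scale, the `kappa` exploration weight, and all time constants are illustrative assumptions.

```python
import math

def simulated_cost(dose_s):
    """Toy cost (hypothetical): unsaturated growth plus a penalty on dose time."""
    return math.exp(-dose_s / 0.5) + 0.05 * dose_s

def kernel(a, b, length=0.7):
    """Squared-exponential similarity between two dose times."""
    return math.exp(-((a - b) ** 2) / (2.0 * length ** 2))

def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (aug[i][n] - s) / aug[i][i]
    return x

def posterior(xs, ys, x_new, noise=1e-4):
    """Gaussian-process posterior mean and standard deviation at x_new."""
    mean_y = sum(ys) / len(ys)
    K = [[kernel(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    k_star = [kernel(a, x_new) for a in xs]
    alpha = solve(K, [y - mean_y for y in ys])
    v = solve(K, k_star)
    mean = mean_y + sum(k * a for k, a in zip(k_star, alpha))
    var = max(1.0 - sum(k * w for k, w in zip(k_star, v)), 0.0)
    return mean, math.sqrt(var)

def bayesian_optimize(n_iter=10, kappa=1.0):
    candidates = [0.1 * i for i in range(1, 51)]   # dose times from 0.1 s to 5.0 s
    xs = [0.5, 4.5]                                # two initial "experiments"
    ys = [simulated_cost(x) for x in xs]
    for _ in range(n_iter):
        untested = [c for c in candidates if all(abs(c - x) > 1e-9 for x in xs)]

        def lower_confidence_bound(c):
            mean, std = posterior(xs, ys, c)
            return mean - kappa * std              # low predicted cost or high uncertainty

        nxt = min(untested, key=lower_confidence_bound)
        xs.append(nxt)                             # run the next "experiment"
        ys.append(simulated_cost(nxt))             # and feed the result back
    best = min(range(len(xs)), key=lambda i: ys[i])
    return xs[best], ys[best]

best_dose, best_cost = bayesian_optimize()
print(f"best dose time: {best_dose:.1f} s, cost: {best_cost:.3f}")
```

The loop knows nothing about ALD itself; it only sees the conditions it has tried and the feedback it got back, which is the point of the cooking comparison.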

In contrast, expert systems draw on an earlier type of AI, which attempts to encode some expert knowledge about expected trends into the optimization process. The system uses those expectations, or rules, along with the feedback it receives after testing a new condition, to work toward the optimal condition. Drawing the cooking comparison, Yanguas-Gil said, “It’s as if we said in the instructions that you should pay attention to the proportions of the main ingredients first, because you can always adjust the seasoning when cooking the dish.”
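A rule-based tuner in this spirit might look like the sketch below. It is an illustration under stated assumptions, not the team's expert system: `simulated_growth` is a hypothetical toy model in which growth saturates with dose time and a short purge adds unwanted extra growth, and the two rules (settle the dose first, then trim the purge) are invented here to mirror the "main ingredients first, seasoning later" idea.

```python
import math

def simulated_growth(dose_s, purge_s):
    """Toy growth-per-cycle model (hypothetical, normalized so 1.0 is ideal)."""
    saturation = 1.0 - math.exp(-dose_s / 0.5)       # longer dose, fuller coverage
    parasitic = 0.5 * math.exp(-purge_s / 1.0)       # short purge, unstable extra growth
    return saturation * (1.0 + parasitic)

def expert_tune(tol=0.02, max_steps=50):
    """Apply two hand-written rules, using feedback after every trial."""
    dose, purge = 0.1, 20.0                          # short dose, generous purge

    # Rule 1 (main ingredient): lengthen the dose until growth stops rising.
    previous = simulated_growth(dose, purge)
    for _ in range(max_steps):
        trial = dose * 1.5
        growth = simulated_growth(trial, purge)
        if growth - previous < tol * previous:       # saturated: no real gain
            break
        dose, previous = trial, growth

    # Rule 2 (seasoning): shorten the purge while growth stays stable.
    target = simulated_growth(dose, purge)
    for _ in range(max_steps):
        trial = purge * 0.8
        if abs(simulated_growth(dose, trial) - target) > tol * target:
            break                                    # purge too short: growth drifts
        purge = trial

    return dose, purge

dose, purge = expert_tune()
print(f"dose: {dose:.2f} s, purge: {purge:.2f} s, "
      f"growth: {simulated_growth(dose, purge):.3f}")
```

Unlike the Bayesian approach, the search order here is fixed by prior knowledge; the feedback only decides when each rule stops.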

Random optimization is the baseline case, he said. In that approach, the system will “select conditions at random and hope that one of them is good.” Eventually, the computer will get closer to the goal, “but you have to be prepared to taste the most intense foods in the process,” Yanguas-Gil said.

“The random system helps us understand how hard it is to get a good recipe,” he said, though it is not really what a user would want to rely on in practice.
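The random baseline is the simplest of the three to write down. The sketch below assumes a hypothetical toy cost function, `simulated_cost`, over a dose time and a purge time; the ranges and constants are illustrative and do not come from the study.

```python
import math
import random

def simulated_cost(dose_s, purge_s):
    """Toy stand-in for an ALD simulation (hypothetical); lower is better."""
    unsaturated = math.exp(-dose_s / 0.5)      # film growth not yet saturated
    instability = math.exp(-purge_s / 1.0)     # leftover precursor from a short purge
    cycle_time = dose_s + purge_s              # longer cycles cost time
    return unsaturated + instability + 0.05 * cycle_time

def random_search(n_trials, seed=0):
    """Pick conditions at random and keep the best one seen so far."""
    rng = random.Random(seed)
    best = None
    for _ in range(n_trials):
        dose = rng.uniform(0.1, 5.0)
        purge = rng.uniform(0.1, 10.0)
        cost = simulated_cost(dose, purge)
        if best is None or cost < best[0]:
            best = (cost, dose, purge)
    return best

cost, dose, purge = random_search(200)
print(f"best of 200 random trials: dose {dose:.2f} s, "
      f"purge {purge:.2f} s, cost {cost:.3f}")
```

Comparing the best result after 20 trials with the best after 200 gives a rough sense of how hard the "recipe" is to find, which is the role the baseline plays in the comparison.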

The lab's researchers evaluated how well the three optimization strategies could identify conditions for “high and sustainable film growth in a short period of time,” among other objectives. The setup included the optimization algorithms, a simulation system, and more. After each simulated experiment, the AI tool interprets the results and recommends the next experiment, all without any input from humans.

The AI-based techniques determined optimal timings for various simulated ALD processes. The lab's release stated that the study was “the first to show the potential for real-time thin film optimization using AI.”

To Yanguas-Gil, combining optimization with in-situ characterization techniques, meaning analysis of a phenomenon where it occurs, and machine learning methods seems like a natural fit. But his group did not find significant examples of this applied to ALD in the scientific literature prior to this work.

“One of the reasons for this is that I think you need a team with different types of expertise to work together: technicians, fundamental science, in-situ characterization and fabrication, machine learning specialists, modeling and simulation, equipment specialists,” Yanguas-Gil said.

The research offers a way to accelerate the adoption of new production processes. It also presents an opportunity for such strategies to help U.S. manufacturers save time and money in chip development.

“The actual impact depends on whether a device manufacturer or a particular fab takes these ideas and applies them to their problem,” the researcher said. “Our mission is to put the technology out there and help the industry bring new capabilities online in any way we can.”

Going forward, he and his team have several ideas on “how to take this research further,” and one of them